A 4-person marketing agency in Austin spent two full days last week trying to get Serper.dev to spit out emails from Google Maps listings. Forty-eight hours. What they got: a CSV with business names, addresses, and phone numbers. Emails? Zero. Social profiles? Nope.

That story pretty much tells you everything about this Google Maps scraper comparison. But there's nuance worth digging into, so bear with me.

The web scraping industry blew past $1 billion in 2025, and analysts at The Business Research Company expect it to hit $2.28 billion by 2030 — an 18.2% CAGR that nobody's ignoring. Contact scraping from platforms like Google Maps is a big chunk of that growth, particularly in advertising, media, and financial services where building fresh lead databases matters more than recycling the same stale lists everyone else bought from the same broker three years ago.

We tested both tools on the same queries. Five criteria: interface, data depth, pricing, scalability, and geo-targeting.

Video: Serper.dev vs Scrap.io — Tutorial & Comparison

What's Inside

- Quick Verdict: Serper.dev vs Scrap.io at a Glance

- Understanding Serper.dev

- Scalability: Where Serper.dev Hits Its Limits

- Scrap.io: The No-Code Alternative

- Side-by-Side Comparison: Data Fields, Features, Output

- Pricing Comparison: Serper.dev vs Scrap.io in 2026

- Geo-Targeting: Radius & Polygon Selection

- When to Choose Serper.dev (And When to Choose Scrap.io)

- FAQ: Serper Dev, Google Maps Scrapers & Alternatives

- The Bottom Line

Quick Verdict: Serper.dev vs Scrap.io at a Glance

If you've got 30 seconds and just want the answer, here it is.

| Feature | Serper.dev | Scrap.io |
|---|---|---|
| Interface | API playground + Python | No-code web dashboard |
| Data fields per lead | ~20 (Maps-native only) | 70+ (Maps + website enrichment) |
| Email extraction | ❌ Nope | ✅ Built-in |
| Social media profiles | ❌ Nope | ✅ Facebook, Instagram, LinkedIn, YouTube, Twitter |
| Pricing | Pay-as-you-go ($50 / 50K credits) | From $49/mo monthly, or $35/mo billed yearly |
| Scalability | Code your own loops | One-click, country-wide |
| Geo-targeting | Manual GPS coordinates | Radius circle or custom polygon |
| No-code | ❌ Python required | ✅ Zero setup |
| Countries | Wherever Google Maps works | 195 countries, 4,000+ categories |

Serper.dev is a SERP API that happens to cover Google Maps. Scrap.io is a specialized Google Maps business data scraper, built from the ground up for lead gen. Two completely different tools that just happen to overlap on one use case.

Understanding Serper.dev

So what actually is Serper.dev? It's a SERP API. You send it a query, it returns structured Google results. Web search, images, videos, news, shopping, places, maps, reviews, scholar, patents, autocomplete. Pretty much every Google product you can think of, delivered as clean JSON in 1-2 seconds. The speed is legitimately impressive — most competitors are 2-3x slower.

For Google Maps specifically, here's the workflow: you type a query (like "real estate agency New York"), punch in latitude/longitude coordinates and a zoom level, and the Serper API fires back results. The playground on their site makes testing easy enough.

Why Google Maps for Lead Gen?

Quick detour for anyone wondering why we're even talking about Google Maps as a lead source. Over 200 million businesses indexed. 195 countries. Every listing is public, with no login wall like the ones LinkedIn and Facebook put up. You want every roofing company in Dallas or every café in Berlin? It's all sitting there.

According to Straits Research, contact scraping ranks among the top use cases for web scraping tools in 2025, especially for lead gen teams in advertising and media.

Setting Up a Query

Sign up on serper.dev, grab an API key, open the playground. Type your search. Set location through lat/long coordinates — which means opening Google Maps in another tab, clicking around to find the right spot, and copying numbers. Pick your language. Choose page count.

Click search. JSON appears.

If you've built anything with APIs before, this feels familiar. If you haven't, you're staring at a wall of curly braces wondering what happened. There's a learning curve, and I won't pretend there isn't.
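If you'd rather not paste coordinates by hand every time, a tiny helper can build the location string and catch obvious typos. This is a minimal sketch that assumes the Maps endpoint takes location as an "@latitude,longitude,zoomz" string, which is the format Serper's playground shows in its generated code; double-check the current docs. The zoom bounds are our own assumption.

```python
def format_ll(lat: float, lng: float, zoom: int = 14) -> str:
    """Return a location string like "@40.7128,-74.006,14z".

    Assumption: Serper's Maps endpoint accepts "@lat,lng,zoomz".
    The 0-21 zoom range is our assumption, based on typical Google Maps zooms.
    """
    if not -90.0 <= lat <= 90.0:
        raise ValueError(f"latitude out of range: {lat}")
    if not -180.0 <= lng <= 180.0:
        raise ValueError(f"longitude out of range: {lng}")
    if not 0 <= zoom <= 21:
        raise ValueError(f"zoom out of range: {zoom}")
    # 7 decimals is about 1 cm on the ground: more than enough precision.
    return f"@{round(lat, 7)},{round(lng, 7)},{zoom}z"
```

For New York, format_ll(40.7128, -74.0060) gives "@40.7128,-74.006,14z". It won't catch swapped latitude and longitude when both values happen to be in range, so eyeball the point on a map once.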

What Data Fields Does Serper.dev Return?

This is where things get interesting — or disappointing, depending on what you need.

| Data Field | Serper.dev | Scrap.io |
|---|---|---|
| Business name | ✅ | ✅ |
| Address | ✅ | ✅ |
| Phone number | ✅ | ✅ |
| Website URL | ✅ | ✅ |
| Rating & review count | ✅ | ✅ |
| Business category + subtypes | ✅ | ✅ |
| Lat / Long | ✅ | ✅ |
| Opening hours | ✅ | ✅ |
| CID / FID / Place ID | ✅ | ✅ |
| Email address | ❌ | ✅ |
| Social media links | ❌ | ✅ |
| Website technologies | ❌ | ✅ |
| Ad pixels (FB Ads, GA) | ❌ | ✅ |
| Contact form URLs | ❌ | ✅ |

Everything Google Maps displays natively? Serper nails it. Everything that requires actually visiting the business's website — emails, Instagram handles, tech stack, whether they run Facebook Ads? Missing. Completely.

That's not a bug, it's just not what Serper was designed for. But if you're building a cold outreach list, a business name without an email is basically useless. (If phone numbers are your priority, check out our phone number scraping tutorial.)
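In code, the gap shows up as a column you simply can't fill. Here's a minimal sketch that flattens one response into CSV-ready rows. The key names (title, phoneNumber, placeId and so on) are our assumption about Serper's Maps output, so print a raw response first and adjust them.

```python
# Assumed Serper Maps response keys; verify against a real response.
FIELDS = ["title", "address", "phoneNumber", "website", "rating",
          "ratingCount", "latitude", "longitude", "placeId"]


def flatten_places(response: dict) -> list[dict]:
    """Turn the "places" array of one response into flat rows."""
    rows = []
    for place in response.get("places", []):
        # Missing keys become empty strings so every row has the same columns.
        row = {field: place.get(field, "") for field in FIELDS}
        # Maps listings carry no email, so this column stays empty
        # unless a separate enrichment step fills it in later.
        row["email"] = ""
        rows.append(row)
    return rows
```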

From Playground to Python

Once your query works in the playground, you'll move to Python to automate things. Copy the code, set up your dev environment, pip install requests, run the script. Standard stuff. It works.
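Here's roughly what that script looks like. It's a sketch, not Serper's official client: it uses Python's built-in urllib instead of requests so it runs without an install, and the endpoint, the X-API-KEY header, and the body keys (q, ll, hl, page) are assumptions modeled on the snippet the playground generates.

```python
import json
import urllib.request

# Assumed endpoint, modeled on the playground's generated code.
SERPER_MAPS_URL = "https://google.serper.dev/maps"


def build_request(api_key: str, query: str, ll: str,
                  page: int = 1) -> urllib.request.Request:
    """Build a POST request for one page of Maps results."""
    body = {"q": query, "ll": ll, "hl": "en", "page": page}
    return urllib.request.Request(
        SERPER_MAPS_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"X-API-KEY": api_key, "Content-Type": "application/json"},
        method="POST",
    )


def fetch_page(api_key: str, query: str, ll: str, page: int = 1) -> dict:
    """Send the request and decode the JSON reply (needs network and a key)."""
    request = build_request(api_key, query, ll, page)
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.loads(response.read().decode("utf-8"))
```

Call fetch_page(your_key, "real estate agency New York", "@40.7128,-74.006,14z") and look at the raw JSON before writing any parsing code; every downstream step depends on those field names.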

Where it gets annoying: GPS coordinates off by one decimal? Wrong city. Pagination logic slightly broken? Duplicate leads everywhere. And debugging API responses at 11pm because a client wants the list by morning... yeah. Been there. For a deep dive into the technical side, our complete Google Maps scraping guide covers all the methods.
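That "wrong city" problem is easy to quantify. A degree of latitude is roughly 111 km, and a degree of longitude shrinks with the cosine of the latitude, so a quick flat-earth estimate is plenty for small slips. The constant below is an approximation, not a geodesy-grade value.

```python
import math

KM_PER_DEG_LAT = 111.32  # rough; a degree of latitude is ~110.6-111.7 km


def shift_km(lat: float, dlat: float = 0.0, dlng: float = 0.0) -> float:
    """Approximate ground distance (km) of a small coordinate error.

    Equirectangular approximation: fine for small offsets,
    not for continent-scale distances.
    """
    north_km = dlat * KM_PER_DEG_LAT
    # Longitude degrees get shorter as you move away from the equator.
    east_km = dlng * KM_PER_DEG_LAT * math.cos(math.radians(lat))
    return math.hypot(north_km, east_km)
```

At New York's latitude (about 40.7°), shift_km(40.7, dlat=0.1) comes out around 11.1 km and shift_km(40.7, dlng=0.1) around 8.4 km. One wrong digit in the first decimal place can easily put your search in a different neighborhood or town.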

Exporting Data

Serper returns JSON. You convert to CSV yourself with pandas or whatever library you like. Each call gives about 20 results. So 200 leads = 10 API calls, manual pagination, output merging. Not hard, just tedious.
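The tedious part (pagination, de-duplication, CSV) fits in a couple of functions. A sketch under stated assumptions: fetch is a hypothetical callable you supply that returns the decoded JSON for a given page number, results sit under a "places" key, and placeId or cid is unique per business. It uses the standard csv module rather than pandas.

```python
import csv
from typing import Callable

# Hypothetical signature: fetch(page_number) -> decoded JSON for that page.
Fetch = Callable[[int], dict]


def collect_places(fetch: Fetch, max_pages: int = 10) -> list[dict]:
    """Walk pages 1..max_pages, stop on an empty page, drop duplicates."""
    seen = set()
    places = []
    for page in range(1, max_pages + 1):
        batch = fetch(page).get("places", [])
        if not batch:
            break  # no more results, so stop making calls
        for place in batch:
            # placeId/cid assumed unique; fall back to name + address.
            key = place.get("placeId") or place.get("cid") or (
                place.get("title"), place.get("address"))
            if key in seen:
                continue
            seen.add(key)
            places.append(place)
    return places


def write_csv(places: list[dict], path: str, fields: list[str]) -> None:
    """Write the chosen fields to CSV; missing keys become empty cells."""
    with open(path, "w", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=fields)
        writer.writeheader()
        for place in places:
            writer.writerow({f: place.get(f, "") for f in fields})
```

With about 20 results per page, max_pages=10 covers the 200-lead example, and the early break stops calling the API once a page comes back empty.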

Scalability: Where Serper.dev Hits Its Limits

Twenty leads from one city? Easy. A hundred thousand leads across 50 US states? Different game entirely.

Scaling with Serper.dev means writing loops. Loop through pages for pagination. Loop through cities, but wait: each city needs its own lat/long, so now you need a coordinates lookup table. Loop through categories if you want dentists AND plumbers AND lawyers. Your nice little script just became a small engineering project.
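Those nested loops are really one Cartesian product: cities × categories × pages. This sketch only builds and counts the calls; it doesn't send anything. The coordinate table is a hypothetical two-city example, and a real one needs an entry for every city you cover.

```python
from itertools import product

# Hypothetical lookup table: each city needs its own "@lat,lng,zoomz" string.
CITY_COORDS = {
    "Austin": "@30.2672,-97.7431,13z",
    "Dallas": "@32.7767,-96.797,13z",
}
CATEGORIES = ["dentist", "plumber", "lawyer"]


def query_plan(cities: dict, categories: list, pages: int) -> list[tuple]:
    """Expand cities x categories x pages into one tuple per API call."""
    return [
        (f"{category} {city}", ll, page)
        for (city, ll), category, page in product(
            cities.items(), categories, range(1, pages + 1))
    ]


plan = query_plan(CITY_COORDS, CATEGORIES, pages=5)
print(len(plan), "API calls")  # 2 cities x 3 categories x 5 pages = 30
```

Scale the city table up to every metro in 50 states and the call count grows multiplicatively, which is exactly where the budget and the debugging time go.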

One Hacker News user flagged the math on this: at $2 per 1,000 queries for 100 results each, costs stack up fast when you're scanning multiple regions. And you still don't have emails after all that.

It's absolutely doable. But "doable with two days of Python" and "ready for a sales team by Friday afternoon" are not the same thing. Our DIY vs professional scraping comparison breaks down exactly when it makes sense to code it yourself versus using a ready-made tool.

Scrap.io: The No-Code Alternative

Scrap.io does one thing. Extracts business data from Google Maps. No web search API, no scholar results, no image search. Just Google Maps lead generation, done properly.

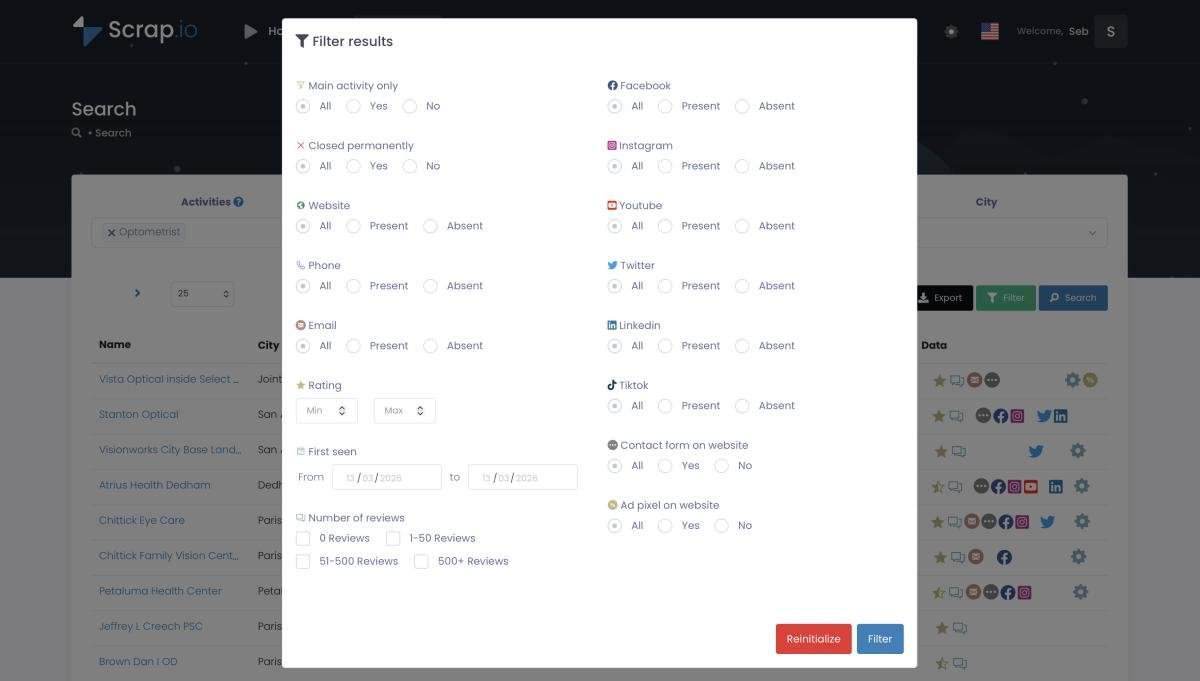

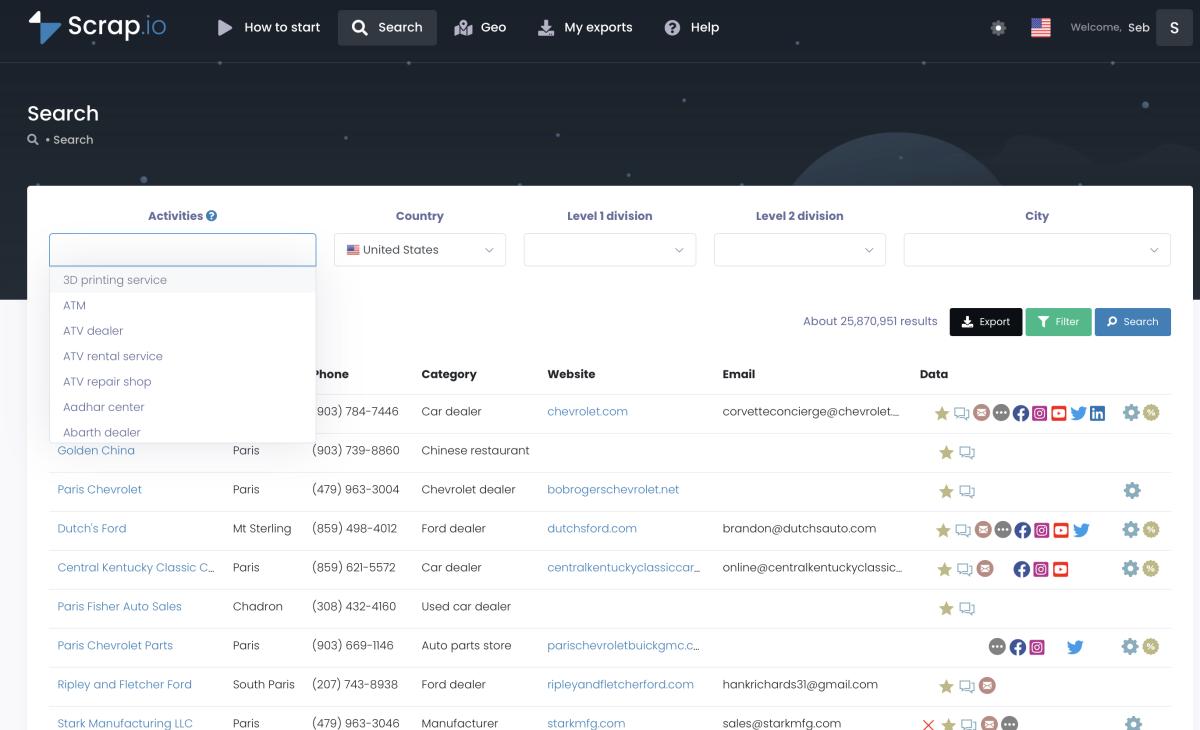

Log in. Pick a category from 4,000+ options — or leave it blank and pull everything in a given area. Select your location: country, state, county, or city. Hit search. See a preview with an exact result count. Filter if you want (only businesses with emails, only those with 10+ reviews, only currently open — there are 17 filters total). Export to CSV or Excel.

Scrap.io's 17 filters let you export only the leads that matter — no junk data.

No code. No API keys. No virtual environment. No stack traces.

Scrap.io search interface: pick a category, select a location, get results instantly.

The output? Seventy columns. Not a typo. Business name, address, phone — yes, all the basics. But also: email addresses (up to 10 per business), Facebook page, Instagram, LinkedIn, YouTube, Twitter, contact form URLs, website meta title, meta description, website generator (WordPress? Wix? Shopify?), ad pixels, and more.

One of our users pulled 200,000 US restaurants with a Facebook account. Single export. Took a while to process, obviously — that's a fat file. But the point is he didn't write a single line of code to do it.

If you're exploring the competitive landscape more broadly, we've also done head-to-head comparisons with Apify, Bright Data, Octoparse, and OutScraper.

Want to see those 70 data fields yourself? Start your free trial on Scrap.io — 100 leads included. Enough to run your own test.

Side-by-Side Comparison: Data Fields, Features, Output

OK. This is probably the section you scrolled straight to. Fair enough.

I ran both tools on the same query: real estate agencies in New York. Same day, same search terms.

The Data Gap

Serper.dev returned solid basics. Names, addresses, phone numbers, websites, ratings, coordinates, hours, category tags. Clean data, well-structured. No complaints on quality.

Scrap.io returned all that — plus email addresses, secondary emails (some businesses list different ones on different pages of their site), social media across five platforms, contact form URLs, website title, meta keywords, the CMS they use, whether they run paid ads on Facebook or Google, and a bunch more. If a business has a website, Scrap.io crawls it. That's the difference.

For cold outreach, this gap is massive. A list with names and addresses is a starting point. A list with emails, LinkedIn profiles, and the knowledge that they already run Facebook Ads? That's a campaign ready to launch. (More on scraping emails from Google Maps if that's your main goal.)
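For a sense of what "crawls it" involves, here is a bare-bones version of the enrichment step you'd have to bolt onto Serper yourself: pull email addresses out of one page's HTML. It's an illustration, not Scrap.io's implementation. The regex is a deliberate simplification: it misses obfuscated addresses ("info [at] example.com"), and the suffix list filters only the most common false positive, retina image names like logo@2x.png.

```python
import html
import re

# Deliberately simple pattern: catches plain-text and mailto: emails,
# it is not a full RFC 5322 validator.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# Image filenames such as logo@2x.png look like emails to the regex.
IGNORED_SUFFIXES = (".png", ".jpg", ".jpeg", ".gif", ".svg", ".webp")


def extract_emails(page_html: str) -> list[str]:
    """Return unique, lower-cased emails in order of first appearance."""
    text = html.unescape(page_html)  # decode entities like &#64;
    found = []
    for match in EMAIL_RE.findall(text):
        email = match.lower().rstrip(".")
        if email.endswith(IGNORED_SUFFIXES) or email in found:
            continue
        found.append(email)
    return found
```

A real crawler also has to find the contact and about pages, respect robots.txt and rate limits, and cope with sites that render contact details in JavaScript, which is where the real work is.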

Feature-by-Feature

| What you need | Serper.dev | Scrap.io |
|---|---|---|
| Name, address, phone | ✅ | ✅ |
| Email addresses | ❌ | ✅ (up to 10 per business) |
| Social profiles | ❌ | ✅ (5 platforms) |
| Website tech stack | ❌ | ✅ |
| Pre-export filtering | ❌ | ✅ (17 filters) |
| Export format | JSON → manual CSV | CSV or Excel, one click |
| Results per query | ~20 per page | Full dataset, no ceiling |
| Multi-city extraction | Code it yourself | Built-in location hierarchy |
| Chrome extension | ❌ | ✅ (Maps Connect) |
| Has an API | ✅ (core product) | ✅ (also available) |

Ready to test it? Start your Scrap.io free trial and export 100 Google Maps leads with the full 70+ data columns.

Pricing Comparison: Serper.dev vs Scrap.io in 2026

Money talk. This is where most comparisons get vague. I'll be specific.

Serper.dev charges pay-as-you-go. Drop $50, get 50,000 API credits. That's roughly $1 per 1,000 queries at the entry tier, scaling down to about $0.30 per 1,000 at bulk volume. Credits expire after 6 months — kind of annoying but manageable. Also: 2,500 free queries to test. (Check their pricing page for the latest.)

The catch? Each Maps query gives ~20 results. And none of those results include email. So if you need emails, you're paying for a separate enrichment tool on top. Hunter.io, Snov.io, whatever — that's easily another $50-100/month.

Scrap.io runs monthly plans. Here's what they look like right now:

| Plan | Monthly billing | Yearly billing | Export credits/mo | Search scope |
|---|---|---|---|---|
| Basic | $49/mo | $35/mo | 10,000 | City |
| Professional | $99/mo | $69/mo | 20,000 | County |
| Agency | $199/mo | $139/mo | 40,000 | State |
| Company | $499/mo | $350/mo | 100,000 | Entire country |

Every export comes with the full 70+ columns. Emails, socials, tech data — all included, no add-ons.

What Does a Lead Actually Cost You?

| Scale | Serper.dev (no emails) | Scrap.io (full data) |
|---|---|---|
| 100 leads | ~$0.50 + enrichment | Free trial |
| 1,000 leads | ~$5 + enrichment ($30-50) | $49/mo or $35/mo yearly |
| 10,000 leads | ~$50 + enrichment ($50-100) | $49/mo or $35/mo yearly |
| 100,000 leads | ~$500 + serious enrichment cost | $499/mo or $350/mo yearly |

Serper.dev's pricing looks cheap in isolation. Factor in what it costs to make those leads usable for outreach (the email enrichment, the data cleaning, the time spent coding) and the math shifts pretty hard.
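If you want to run the comparison with your own numbers, the arithmetic is short. A sketch: the ~20 results per call and the plan prices come from this article, while the API spend, enrichment cost, hours, and hourly rate are inputs you supply. Anything you plug in is yours, not a benchmark.

```python
import math


def queries_needed(leads: int, results_per_query: int = 20) -> int:
    """API calls needed to reach a lead count (~20 results per Maps call)."""
    return math.ceil(leads / results_per_query)


def scrapio_cost_per_lead(monthly_price: float, export_credits: int) -> float:
    """Plan price spread over a full month of export credits."""
    return monthly_price / export_credits


def total_cost(api_cost: float, enrichment_cost: float,
               hours_coding: float, hourly_rate: float) -> float:
    """Add up the line items the raw API price leaves out."""
    return api_cost + enrichment_cost + hours_coding * hourly_rate
```

queries_needed(200) returns 10, matching the 200-lead example earlier; scrapio_cost_per_lead(49, 10_000) is $0.0049 per lead on the monthly Basic plan, assuming you use the whole month's credits. Multiply queries_needed by your tier's per-query price to get the api_cost input.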

Geo-Targeting: Radius & Polygon Selection

Most people don't think about geo-targeting until they need to scrape "every pizza place within 3 miles of this intersection" or "all businesses inside this specific commercial district but NOT the residential streets around it." Then it becomes urgent.

Serper.dev: GPS coordinates. Period. You type in a latitude, longitude, and zoom level. Want a different area? New coordinates. Want to cover a state? Build a coordinate grid and query each point separately. Works, but it's manual labor disguised as automation.
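Here's what "build a coordinate grid" means in practice: a sketch that lays roughly evenly spaced points over a bounding box, each of which becomes its own query. It relies on a flat-earth approximation (fine at city or state scale, not across the antimeridian or near the poles), and how the spacing should relate to the zoom level you send is a judgment call this sketch doesn't settle.

```python
import math

KM_PER_DEG_LAT = 111.32  # rough; a degree of latitude is ~110.6-111.7 km


def coordinate_grid(south: float, west: float, north: float, east: float,
                    spacing_km: float) -> list[tuple[float, float]]:
    """Return (lat, lng) points about spacing_km apart inside a box.

    Longitude steps widen by 1/cos(latitude) on each row so points stay
    roughly spacing_km apart east-west.
    """
    points = []
    dlat = spacing_km / KM_PER_DEG_LAT
    rows = int((north - south) / dlat) + 1
    for i in range(rows):
        lat = south + i * dlat
        dlng = spacing_km / (KM_PER_DEG_LAT * math.cos(math.radians(lat)))
        cols = int((east - west) / dlng) + 1
        for j in range(cols):
            points.append((round(lat, 5), round(west + j * dlng, 5)))
    return points
```

coordinate_grid(30.2, -97.8, 30.3, -97.7, 5.0), a small box around central Austin, returns 6 points. Multiply by categories and pages and you have your call count.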

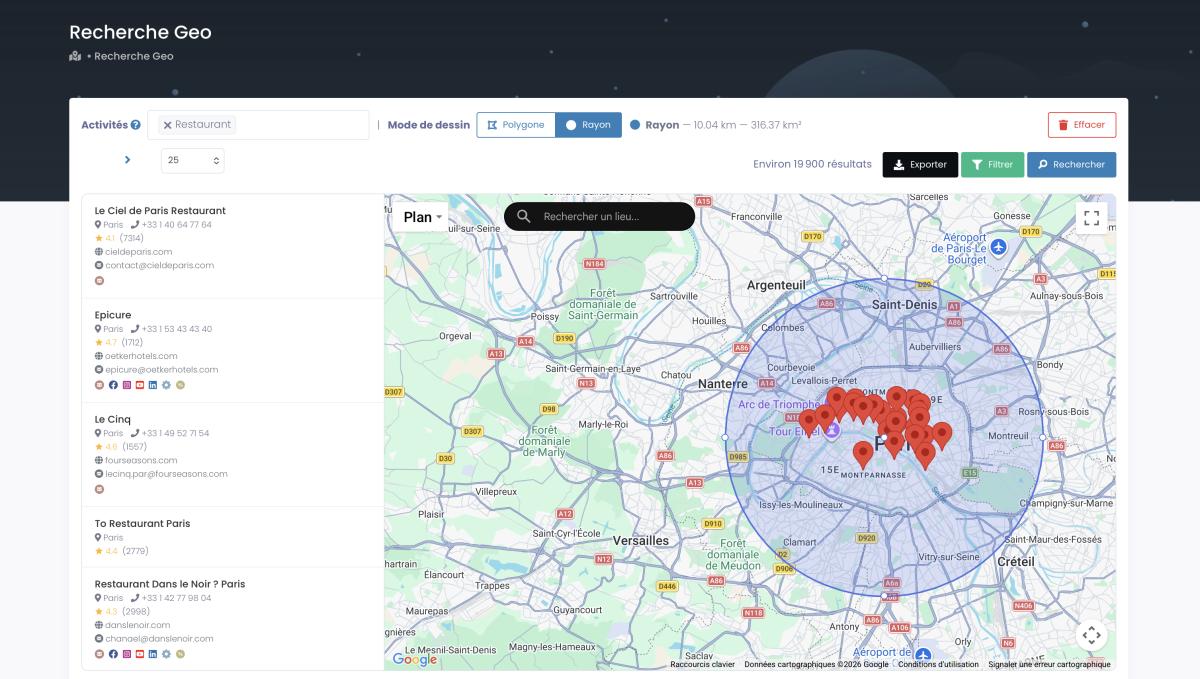

Scrap.io gives you three options, and the third one is the killer feature here:

- Administrative divisions — country, state, county, city. No coordinates needed.

- Radius selection — drop a pin, draw a circle. Every restaurant within 5km of downtown Nashville? Done.

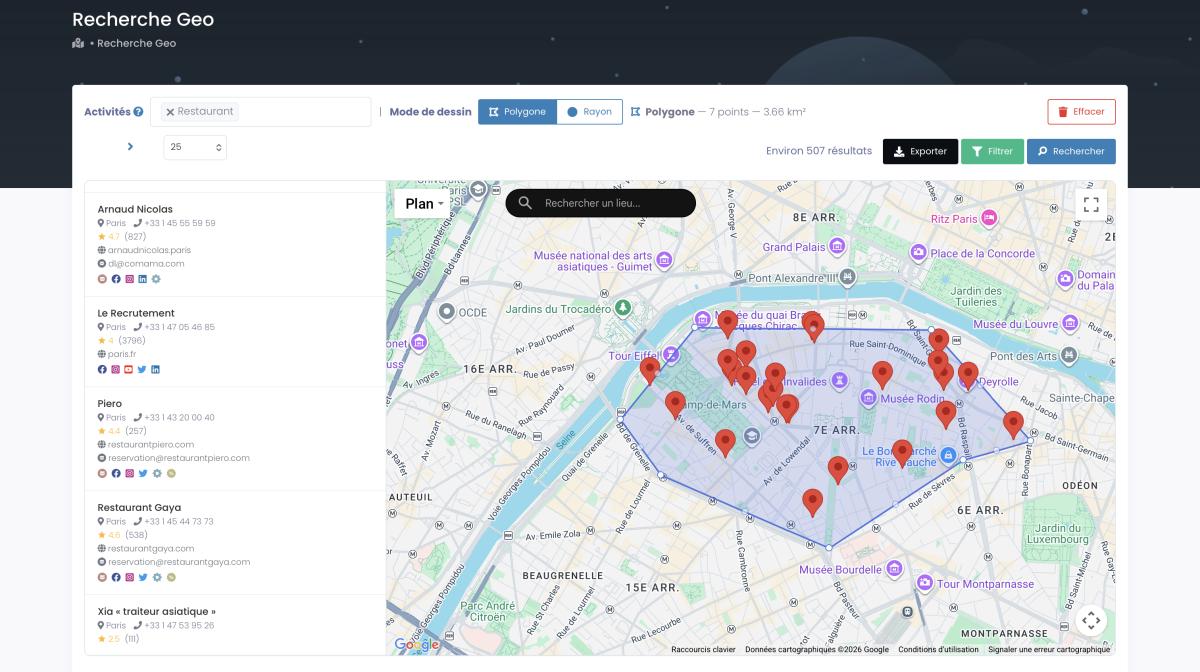

- Polygon drawing — trace a custom shape around any zone on the map. A commercial district, a specific neighborhood, a stretch of highway with strip malls.

Radius selection: drop a pin, set a distance, and Scrap.io pulls every business inside the circle.

Polygon selection: trace a custom shape around any neighborhood or commercial district.

That polygon feature is the reason agencies with local clients end up on Scrap.io. You can't replicate it with GPS coordinates in Serper.dev, not without building your own geometry engine. For more on this, our geomarketing guide and our piece on scraping densely populated areas go deep.
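To make "your own geometry engine" concrete: once results come back, you'd filter each one against the circle or polygon yourself. A minimal sketch, assuming every result carries latitude and longitude. The polygon test treats coordinates as a flat plane, which is our simplification for neighborhood-sized shapes, and none of this describes how Scrap.io implements the feature.

```python
import math

Point = tuple[float, float]  # (lat, lng)


def within_radius(center: Point, point: Point, radius_km: float) -> bool:
    """Haversine great-circle distance check against a circle."""
    lat1, lng1, lat2, lng2 = map(math.radians, (*center, *point))
    a = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lng2 - lng1) / 2) ** 2)
    distance_km = 2 * 6371.0 * math.asin(math.sqrt(a))  # mean Earth radius
    return distance_km <= radius_km


def inside_polygon(point: Point, polygon: list[Point]) -> bool:
    """Ray casting: count how many edges a ray heading east crosses."""
    lat, lng = point
    inside = False
    for i in range(len(polygon)):
        lat1, lng1 = polygon[i]
        lat2, lng2 = polygon[i - 1]  # previous vertex closes the ring
        # Only edges that straddle the point's latitude can be crossed.
        if (lat1 > lat) != (lat2 > lat):
            # Longitude where this edge crosses the point's latitude.
            cross_lng = lng1 + (lat - lat1) * (lng2 - lng1) / (lat2 - lat1)
            if lng < cross_lng:
                inside = not inside
    return inside
```

Note that this only trims results you've already paid for; you still need enough query points to cover the shape in the first place.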

When to Choose Serper.dev (And When to Choose Scrap.io)

Honest take. No tool is right for every situation.

Serper.dev makes sense when:

- You're a developer building something that needs Google SERP data across multiple products (web, images, news, maps, shopping, scholar)

- Google Maps is just one data source in a larger pipeline and the Serper API handles the data extraction layer

- You need a lightweight, fast SERP API for an integration

- Email extraction and social data genuinely don't matter for your use case

Scrap.io makes sense when:

- You specifically need Google Maps leads — with emails, phones, social profiles, and enrichment data

- You're not a developer, or you don't feel like spending a weekend writing Python for something that should take five minutes

- Scale matters: entire cities, states, countries

- Geo-targeting by radius or polygon would save you hours

- You want a no-code Google Maps scraper that just works out of the box

For more comparison angles: D7 Lead Finder vs Scrap.io, LeadStal vs Scrap.io, PhantomBuster alternative.

FAQ: Serper Dev, Google Maps Scrapers & Alternatives

What is Serper.dev?

A SERP API for developers. You send it a search query, it returns structured Google results as JSON — web, images, maps, news, shopping, whatever. For Google Maps, it returns business listings with basic fields (name, phone, address, rating). No email extraction. Pricing starts at $50 for 50,000 queries. Fast — 1-2 second response times. Not a lead gen tool per se, more of an infrastructure component for people building their own.

Is Serper.dev legit?

Totally. It's a well-established SERP API with integrations in CrewAI, LangChain, and a bunch of AI agent frameworks. Developer community likes it for its speed-to-cost ratio. Just know what you're buying: raw search data, not ready-made lead lists.

What is the best Serper dev alternative for Google Maps scraping?

Depends what you mean by "alternative." If you want another SERP API, look at SerpAPI, SearchAPI, or DataForSEO. If you want a tool specifically for extracting Google Maps leads with emails and social data — which is usually what people mean when they Google "serper dev alternative" — that's Scrap.io. Purpose-built for that exact job. Our complete Google Maps scraping guide covers all the options.

How much does Serper.dev cost?

Pay-as-you-go: $50 gets you 50,000 credits. Works out to about $1 per 1,000 queries at the base tier, dropping to roughly $0.30/1K at volume. 2,500 free queries to start. Credits last 6 months. Remember this is raw API cost — you'll spend more if you need email enrichment from another service.

What's the best Google Maps scraper in 2026?

For developers wanting API-level control across Google products: Serper.dev or SerpAPI. For non-technical users wanting leads with full contact data: Scrap.io. For small-scale extractions on a budget: Chrome extensions. For maximum control at maximum cost: Google Places API at $32-40 per 1,000 requests.

Is scraping Google Maps legal?

Short answer: scraping publicly available business data is generally legal. In hiQ v. LinkedIn, the Ninth Circuit held that scraping publicly accessible data likely doesn't violate the CFAA, although a site's terms of service can still support contract claims. Google's ToS technically prohibit automated extraction; enforcement is mostly targeted at aggressive, high-volume abuse rather than standard business data collection. GDPR adds requirements if you're processing EU personal data. Both Serper.dev and Scrap.io extract only publicly available business information. For the full picture, read our article on the legal aspects of scraping Google Maps.

How do I actually scrape Google Maps?

Four main approaches: SERP APIs like Serper.dev (developer-focused, code required), no-code platforms like Scrap.io (search-filter-export), Chrome extensions (free but limited to ~120 results), or the official Google Places API (powerful but expensive). For most people, Scrap.io is the fastest path from "I need leads" to "here's my spreadsheet."

What data can you pull from Google Maps?

The basics: business name, address, phone, website, ratings, reviews, hours, coordinates, categories. Advanced tools like Scrap.io add: email addresses, social media profiles across five platforms, website technologies, ad pixels, contact form URLs, and SEO metadata — up to 70+ data columns per listing. For coordinate-specific extraction, our coordinates guide covers the details.

How many leads can I get from these tools?

Serper.dev: limited by your API budget and coding effort. Realistically hundreds to a few thousand per session unless you build a serious pipeline. Scrap.io: we've seen single exports hit 200,000+ leads. Country-level extraction is built into the Company plan. The filtering guide helps you avoid exporting junk.

The Bottom Line

Both tools work. I'm not going to pretend Serper.dev is bad — it isn't. For what it does (fast, cheap SERP data across all Google products), it's one of the best options out there.

But for Google Maps lead generation? The comparison isn't close.

Serper gives you ~20 data fields. No emails. No social links. No website tech data. You code everything — pagination, multi-city logic, export formatting. And then you still need to buy another tool to get contact information.

Scrap.io gives you 70+ fields with emails and social profiles included. No code. As a Google Maps extractor, it offers geo-targeting by radius or polygon that Serper can't match at any price. And the data extraction scales from a single ZIP code to an entire country without changing anything except a dropdown.

Three things that matter: data depth (70 vs 20 fields), email extraction (built-in vs buy-it-elsewhere), usability (click a button vs write a script).

For automating what comes after the export — feeding leads into your CRM, personalizing outreach — check out the Make.com automation tutorial and the CRM enrichment guide. And if you want emails visible while browsing Google Maps, the free Maps Connect Chrome extension does exactly that.

Oh, and if you've been targeting real estate professionals specifically — we've got a dedicated playbook for that too. Also worth reading: our breakdown of AI-powered cold email personalization for local businesses.

Ready to generate leads from Google Maps?

Try Scrap.io for free for 7 days.